This post was originally called Digital Power Transmission. It began with content about the use of artificial intelligence (AI) to find faults in electrical power assets in Kansas and Missouri. That introduction became ancient history on 2023-10-26, when I read an article about pigeons using the same approach to problem solving as AI. I wondered if pigeons would make AI more understandable.

So, now this post begins with a scientific study of pigeons!

Pigeons

The domestic pigeon, Columba livia domestica, has been found in records that are 5 000 years old. Its domestication is far older, possibly stretching back 10 000 years. Among pigeons that are bred for particular attributes, homing pigeons are bred for navigation and speed.

Pigeons are able to acquire orthographic processing skills (the use of visually represented words and symbols) and basic numerical skills equivalent to those shown in primates.

In Project Sea Hunt, a US Coast Guard search and rescue project in the 1970s and 1980s, pigeons were shown to be more effective than humans at spotting shipwreck victims at sea.

A study was undertaken at the University of Iowa, by Brandon Turner, lead author and a professor of psychology, and Edward Wasserman, co-author and a professor of experimental psychology. Twenty-four pigeons were given a variety of visual tasks, some of which they learned to categorize in a matter of days, and others in a matter of weeks. The researchers found evidence that the mechanism pigeons use to make correct choices is similar to the one AI models use to make predictions. Using AI-speak, nature has created an algorithm that is highly effective at learning very challenging tasks, not necessarily fast, but with consistency.

On a screen, pigeons were shown different stimuli, like lines of different width, placement and orientation, as well as sectioned and concentric rings. Each bird had to peck a button on the right or left to indicate which category the stimulus belonged to. If the bird got it correct, it got a food pellet; if it got it wrong, it got nothing.

Pigeons learn through trial and error. With simple tasks, pigeons improved their ability to make right choices from 55% to 95% of the time. With more complex challenges, accuracy increased from 55% to 68%.

In an AI model, the main goal is to recognize patterns and make decisions. Pigeons do the same. Learning from the consequences of being given a food pellet (or not), they show a remarkable ability to correct their errors. A similarity function is also at play: pigeons use their ability to find resemblance between two objects.

Those two mechanisms alone can be used to define a neural network, an AI machine that solves categorization problems.
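To make those two mechanisms concrete, here is a minimal Python sketch of a learner that uses nothing but error correction and similarity. It is a toy, not the model from the Iowa study: the line widths, the category rule (narrow is left, wide is right), the learning rate and the sharpness of the similarity function are all assumptions chosen for illustration.

```python
import math

# Hypothetical stimuli: line widths between 0 and 1, shown in this order.
# Narrow lines belong to the "left" category (-1), wide lines to "right" (+1).
WIDTHS = [0.9, 0.1, 0.8, 0.2, 0.7, 0.3]
LABELS = [+1, -1, +1, -1, +1, -1]


def similarity(a, b, sharpness=5.0):
    """Resemblance of two widths: 1.0 when identical, falling toward 0 as they differ."""
    return math.exp(-sharpness * abs(a - b))


def train(widths, labels, passes=10, rate=0.5):
    """Return the fraction of correct pecks in each pass through the stimuli."""
    strength = [0.0] * len(widths)  # one association strength per remembered stimulus
    accuracy = []
    for _ in range(passes):
        correct = 0
        for i, (width, label) in enumerate(zip(widths, labels)):
            # Similarity: the prediction generalizes from every remembered stimulus.
            prediction = sum(s * similarity(width, w) for s, w in zip(strength, widths))
            choice = 1 if prediction > 0 else -1  # no preference yet counts as "left"
            correct += int(choice == label)
            # Error correction: the pellet (or its absence) nudges the association
            # in proportion to how far the prediction missed the outcome.
            strength[i] += rate * (label - prediction)
        accuracy.append(correct / len(widths))
    return accuracy


accuracy = train(WIDTHS, LABELS)
print([round(a, 2) for a in accuracy])
```

With these made-up stimuli, the simulated bird gets some pecks wrong in its first pass and then settles on the correct button for every width. Real pigeons, facing far harder stimuli, needed days or weeks.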

Back to the original content, Digital Power Transmission

Now, this post will examine the use of AI, and other digital technologies, in electrical energy transmission. Sometimes one has to venture outside of one’s backyard to gain new insights. Today, the focus is on Kansas and Missouri. More than four percent of this blog’s readers have roots in Kansas, in Leavenworth and Riley counties, making it one of the “big six” American states. The others are (in alphabetical order) Arizona, California, Michigan, New Hampshire and Washington. Yes, this weblog does have American content, because, sometimes, Americans are at the forefront.

Much of the initial work into the use of AI in grid management was done by Argonne National Laboratory, of Lemont, Illinois. After conducting AI grid studies, they stated that: “In a region with 1 000 electric power assets, such as generators and transformers, an outage of just three assets can produce nearly a billion scenarios of potential failure.” The calculation is 1 000 x 999 x 998 = 997 002 000, which is close enough to a billion for most people. Note that this counts the three failed assets in the order in which they fail.
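For readers who want to check the arithmetic, a short Python sketch follows. The post does not say which counting convention Argonne used, so treating the three failures as an ordered sequence is an assumption that happens to match the figure; the unordered count is shown only for comparison. The names `ASSETS` and `OUTAGES` are made up for this example.

```python
import math

ASSETS = 1_000   # electric power assets in the region
OUTAGES = 3      # assets that fail

# Ordered: which asset fails first, second and third matters.
ordered = math.perm(ASSETS, OUTAGES)    # 1000 * 999 * 998

# Unordered: only the set of three failed assets matters.
unordered = math.comb(ASSETS, OUTAGES)  # ordered / (3 * 2 * 1)

print(f"{ordered:,}")    # 997,002,000
print(f"{unordered:,}")  # 166,167,000
```

Either way, the number of scenarios is far too large to check one by one.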

The Norwegian company, eSmart Systems, with its headquarters in Halden, bordering Sweden, in south-eastern Norway, provides AI based solutions for the inspection and maintenance of critical infrastructure related to electrical power generation and distribution.

Note: the term asset, as used here, generally refers to a large structure, such as an electrical power generating station, or a substation that transforms voltages (and amperages). For me, an asset will always be an accounting term, associated with the debit (left) side of a balance sheet, in contrast to a liability on the credit (right) side. My preferred terminology would be structure, works or plant.

eSmart

In this project eSmart will act as project management lead alongside engineering consultants EDM International, Inc. of Fort Collins, Colorado and GeoDigital, of Sandy Springs – near Atlanta – Georgia. Together, these will provide large-scale data acquisition and high-resolution image processing.

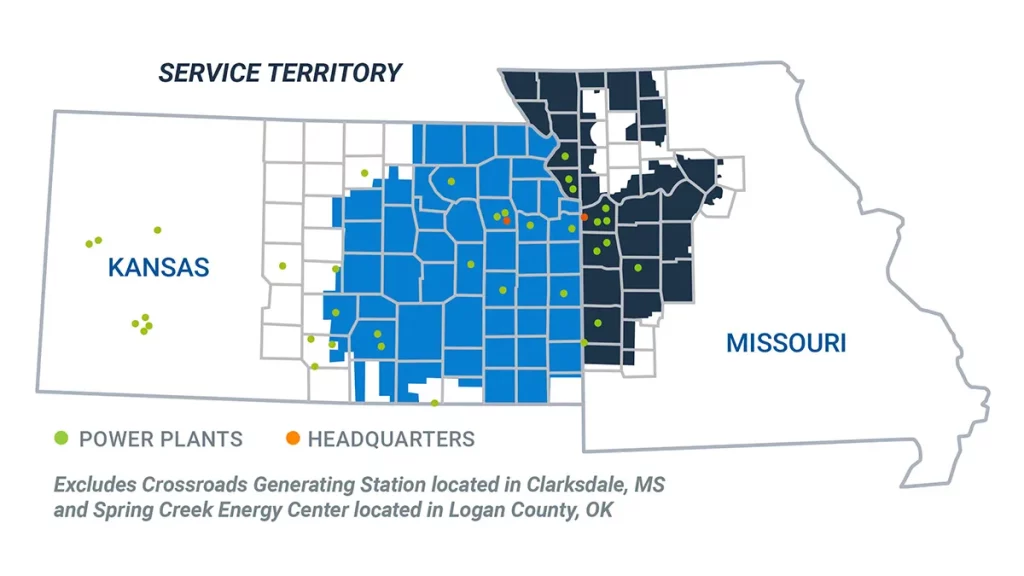

eSmart Systems is working with Evergy, a Topeka, Kansas based electric utility company that serves more than 1.6 million customers in Kansas and Missouri, to digitize Evergy’s power transmission system. It is also working with Xcel Energy, based in Minneapolis, Minnesota, and an unnamed “major public utility in the Southeast” of the United States.

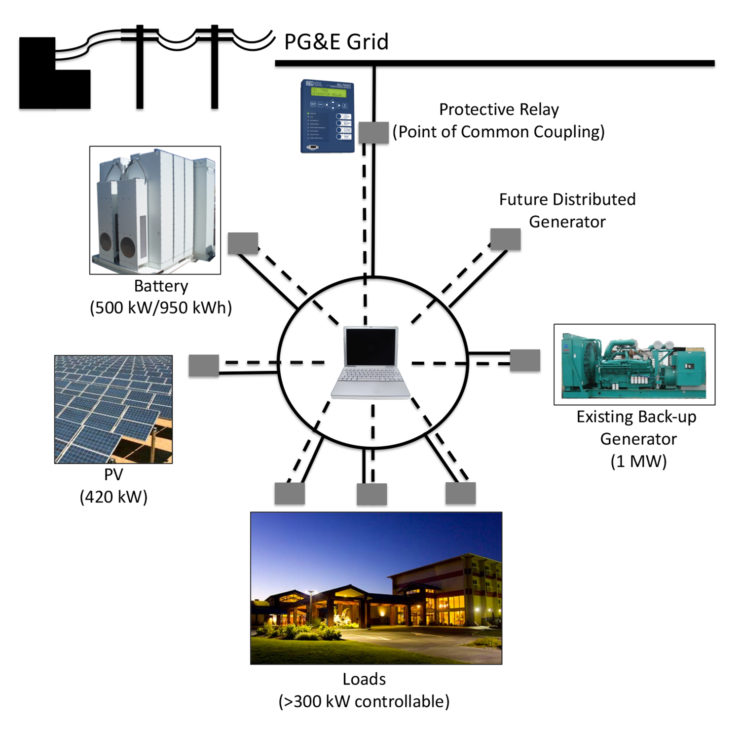

Grid Vision tracks the performance of ongoing inspection work, provides instant insight into the location and severity of verified high-priority defects, and gives utility managers and analysts a deep and flexible framework for further asset intelligence.

The three-and-a-half-year-long Evergy project will improve reliability and resiliency of over 14 000 km of Evergy’s power transmission system by using Grid Vision to create a digital inventory of its assets, accelerating image analysis capabilities, and improving inspection accuracy by using AI combined with virtual inspections. The expected result is a significant cost reduction for inspections, maintenance and repairs.

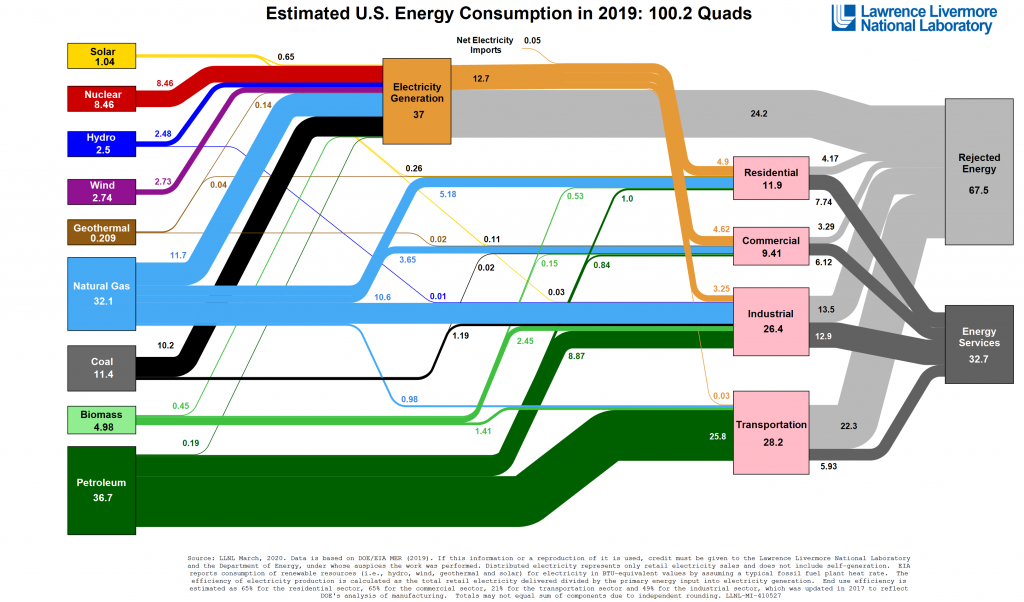

There is a need for a dynamic energy infrastructure to ensure efficient, safe and reliable operations. AI, and especially machine learning, is increasingly used as a tool to improve the reliability of high-voltage transmission lines. In particular, it can allow a grid to transition away from fossil and nuclear sources to more variable sources, such as solar and wind. This will become more important for several reasons. Extreme weather will make operations increasingly challenging, and the grid will have to support an increasing number of electric vehicles.

The vast number of choices means that random choices cannot be relied upon to provide results when facing multiple failures. Some form of intelligence is needed, human or machine, real or artificial, if problems are to be resolved quickly.

Wind and solar generation

Kansas state senator Mike Thompson (R-Shawnee) is a former meteorologist who is currently chair of the Kansas Senate Utilities Committee. He has introduced bill SB 279, “Establishing the wind generation permit and property protection act and imposing certain requirements on the siting of wind turbines.” This bill would require wind and solar farms to be built on land zoned for industrial use. The problem with this proposal is that half of Kansas’ 105 counties are unzoned. Any of these counties that wants wind or solar energy would first have to zone land as industrial.

The Annual Economic Impacts of Kansas Wind Energy Report 2020 reports that wind energy is the least expensive energy source, providing 22 000 jobs (directly and indirectly). Kansas ranks second in the US, after Iowa, for wind power, which contributes 44% of Kansas’s net electricity generation.

Typically, there are two reasons for objections to wind and solar power. First, some people have an economic connection with fossil fuels. Second, and especially for wind, some people don’t like the visual and aural impact of the installations on the environment.

Another source of conflict is aboriginal rights. This topic will be covered in an upcoming but unscheduled post, Environmental Racism.